Instagram reacts to exposé on promoting pedophile accounts on platform

An exposé by The Wall Street Journal has revealed that Instagram’s algorithms connect and promote a vast network of accounts openly devoted to the commissioning and purchase of underage sexual content.

The discovery was made during a joint investigation by researchers from Stanford University, the University of Massachusetts Amherst and The Wall Street Journal.

Meta, which owns Instagram, has been accused of directing users to child sex content through the platform’s community-building systems, despite claiming to be improving its internal controls. Accounts engaged in such illegal activity are shamelessly promoted using explicit hashtags such as #pedowhore, #preteensex and #pedobait.

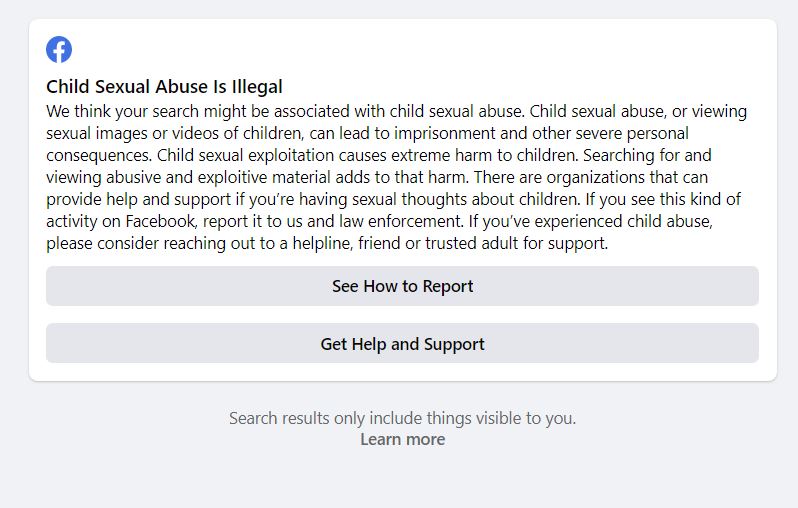

In response to this damning exposé, the platform now displays warnings when users search for these offensive hashtags and accounts.


When Nairobi News ran a spot check on the social media site and searched for the aforementioned hashtags, the following message appeared: “#pedowhore: Sexual abuse of children is illegal. We believe your search may be related to child sexual abuse. Engaging in child sexual abuse or consuming explicit content involving children can result in prison sentences and serious personal consequences. Such abuse causes extreme harm to children and searching for or viewing such material only adds to their suffering. For confidential support or information on how to report inappropriate content, please visit our Help Centre. IWIP – Get Resources”.


Facebook has introduced warnings for inappropriate hashtags as well, mirroring the measures taken on Instagram.

The TikTok platform has seen the emergence of the #Pedobait trend, where users dress up as young girls and create sensual content. However, searching for other inappropriate hashtags on TikTok now triggers warnings.

Twitter, by contrast, does not display inappropriate content when users search for these hashtags.

According to the WSJ article, when researchers created a test account on Instagram and viewed content shared by these networks, they were immediately recommended more accounts to follow. The report claims that following just a few of these recommendations was enough to flood the test account with content that sexualised children.


Variety reported that the Stanford Internet Observatory research team discovered 405 sellers of “self-generated” child sex material (accounts allegedly run by minors themselves) using hashtags associated with underage sex. The article even mentioned that, for the right price, children were available for in-person “meet-ups”.

Meta admitted to the WSJ that it had failed to act on reports of such content and said it was reviewing its internal processes. Alex Stamos, head of the Stanford Internet Observatory and former chief security officer at Meta, said the fact that a team of three academics with limited access could find such a significant network should raise alarms at Meta. Stamos emphasised the need for the company to reinvest in human investigators.

The Stanford researchers found 128 accounts offering to sell child sexual abuse material on Twitter, fewer than a third of the number they found on Instagram. Twitter also appears to recommend these accounts to a lesser extent than Instagram and removes such content and accounts promptly.

David Thiel, chief technologist at the Stanford Internet Observatory, emphasised the importance of implementing safeguards to ensure nominal safety on a rapidly growing platform, highlighting Instagram’s failure to do so.
